CYCLE FOR LARGE-SCALE ASSESSMENTS

- Introduction to the Taxonomy

- Background to the Taxonomy

- Levels of Guidance

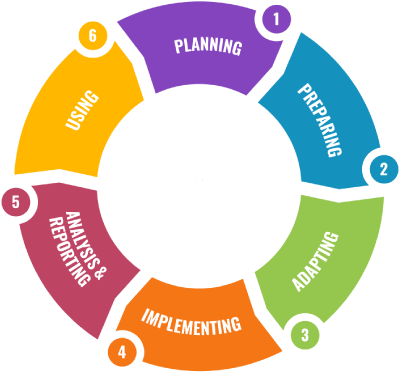

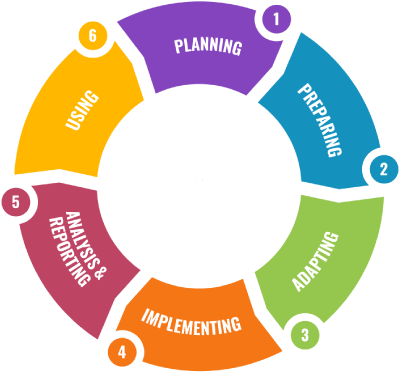

The following diagram shows the Assessment for Learning framework, which covers 25 key assessment topics across six major phases. Guidance for each topic is provided at three levels: Introductory, Intermediate and Advanced. The circular diagram represents the typical order of assessment activities (clockwise) as well as the (ideally) cyclic nature of assessment: analysis and use of results from one cycle feed into the next cycle of planning, preparing, etc. In reality, however, some activities in the diagram may occur in parallel or in a slightly different order, and not all activities will be included in each assessment cycle. The diagram is intended to cover all key assessment activities, but the relevance of individual components depends on the context and purpose of the assessment.

The structure of the taxonomy is based on: (i) ACER’s 14 key areas of a robust assessment program; (ii) the four phases (now extended to six) and 25 elements of the assessment taxonomy developed at UNICEF ROSA; and (iii) subsequent discussions drawing on the expertise and experiences of the ACER India team.

Each assessment topic will have guidance at three levels of complexity, to cater to users with varying levels of expertise: Introductory, Intermediate and Advanced.

- Selecting Types of Assessments

- Determining Policy Goals and Issues

- Ensuring Project Team and Resources

- Developing Technical Standards

- Creating an Assessment Framework

The ‘planning’ phase concerns all of the elements that need to be in place before the development of assessment materials can take place.

It is important to be able to distinguish between different types of assessment and to decide when they are, and are not, appropriate.

- Identify different purposes and types of assessment, who is responsible for each one and what data each form of assessment can yield for which group of stakeholders (including cross-national assessments, national assessments, regional assessments, formal school exams and school-based assessment).

- Make informed selections of the appropriate type of assessment to suit different purposes and to inform particular areas of policy and practice, and be aware of the limitations of each type as well as the cost/benefit balance.

At the start of an assessment programme it is necessary to determine what policy goals and issues it can help to inform.

- Identify policy issues of interest that can reasonably be expected to be informed by data from particular types of assessment, and consider those issues that are difficult to address using assessment data.

- Identify intended policy goals that can reasonably be expected to be informed by data from particular types of assessment, and identify those policy goals that are difficult to support using assessment data.

- Design an assessment strategy to prioritise key educational policy issues and goals and determine its parameters based on the identified priorities.

Before starting an assessment programme, people with relevant skills and knowledge need to be available and the necessary resources need to be in place.

- Identify the required skills and knowledge of an assessment cell or assessment project team, depending on the scale and type of assessment, and including the time requirements for each assessment activity.

- Undertake an exercise to identify the current capacity of team members and their capacity development requirements (including duration of training before achieving a level of expertise).

- Identify systems and resource requirements to undertake different types of assessment, the strengths and weaknesses of different approaches and their budget implications.

It is important to agree the minimum technical requirements that need to be achieved before assessment data can be relied on.

- Understand the importance of defining technical standards in an assessment project, monitoring adherence to these and reporting against them.

- Consider key topics that need to be included in technical standards (with reference to technical standards available for large scale assessments).

- Identify key requirements for each topic, and consider example standards.

- Consider approaches to ensuring adherence to technical standards throughout an assessment cycle.

- Identify how to report against technical standards in a final assessment report or technical report.

An assessment framework establishes the parameters for an assessment and is the basis for the development of assessment materials.

- Understand the purpose of an assessment framework and the ways in which it differs from a blueprint.

- Identify the key topics for inclusion in an assessment framework and the level of detail that is required for each one.

- Consider sources of information that are required to develop an assessment framework and how these may need to be adapted to inform the development of high quality assessment instruments.

- Look at definitions of key terms in available assessment frameworks from large scale assessments and identify how these may need to be adapted to suit a different context.

- Consider how to use the assessment framework in guiding assessment item development.

- Identify strategies to review assessment materials against an assessment framework to ensure consistency.

- Developing Cognitive Items

- Assessing 21st Century Skills

- Developing Contextual Questionnaires

- Controlling Language Quality

The ‘preparing’ phase concerns all of the elements that are required to develop and quality assure materials to collect cognitive and contextual assessment data.

Cognitive items measure students’ skills and knowledge and developing them requires a high degree of skill in addition to layers of quality assurance.

- Identify the key steps required to develop high quality cognitive items, the approximate time required per item and the skills required of item writers.

- Consider best practice approaches to the quality assurance of assessment items (including panelling and cognitive testing) and how these can be implemented.

- Identify the role of a pilot study, relevant approaches to piloting, the timing of piloting in relation to main testing and the use of pilot data to inform improvements.

There is increasing interest in measuring students’ skills in areas such as team work, problem solving and critical thinking.

- Understand the current state of assessment activities on 21st century skills around the world and proven approaches to assessing different skills.

- Consider the advantages and disadvantages of assessing 21st century skills within cognitive skills assessment or separately.

- Identify key considerations in the assessment of 21st century skills and practical steps towards assessing them.

Information on key background variables should be collected during the assessment programme to help the interpretation of findings.

- Identify why the collection of contextual data is important in helping to interpret assessment data.

- Consider what sort of contextual data is required, and from whom, to undertake data analysis that can inform policy issues and goals.

- Understand how to develop robust and efficient contextual data collection tools that align with the assessment framework and include rigorous quality assurance.

Whether assessment materials are developed in one or more languages, it is important to ensure that the language used is appropriate for the students being assessed.

- Understand the challenges of assessing in more than one language, or across variants of the same language.

- Identify approaches to developing general and item-specific translation and/or adaptation guidelines.

- Consider key quality assurance processes in adaptation and/or translation processes and how these can be monitored.

- Adapting Assessment for Young Children

- Adapting Assessments for Adolescents

- Adapting Assessments for Students with Special Needs

- Conducting Assessment with Vulnerable Populations

- Adapting Assessments for School Closures & Re-opening

There is an increasing focus on assessing young children and this requires specific approaches to the development and implementation of assessment materials.

- Understand the key considerations in the assessment of young children and its importance in the context of Sustainable Development Goals.

- Identify common approaches to assessing young children, their advantages and disadvantages and the implication of different approaches for comparability of data.

- Consider ways to use the assessment of young children as a foundation for tracking individual student progress over time or monitoring the quality of early childhood development.

As students come closer to the end of school, it is increasingly important to assess a range of skills such as employability and wellbeing.

- Understand key considerations in the assessment of adolescents, including the measurement of employability skills.

- Identify approaches to the assessment of employability skills.

- Consider approaches to the assessment of life skills, wellbeing and resilience.

If assessment instruments are to be used with students with special needs, they need to undergo appropriate adjustments to make them accessible.

- Identify key categories of special needs and common adaptations in assessment to support students in these categories.

- Understand good practice in supporting students with special needs to participate in assessment activities.

- Consider cost effective solutions to adapting assessment for students with special needs.

When assessment is done with students from disadvantaged backgrounds, there are particular factors in design and implementation that need to be considered.

- Identify the key categories of vulnerable populations (including those who are homeless, refugees, from lower socio-economic strata and from minority groups).

- Consider the challenges posed in assessing vulnerable populations and adaptations that can be made.

- Understand the advantages and disadvantages of assessing students in their first language rather than the dominant language.

- Designing Assessment Instruments

- Designing Sampling

- Standardising Field Operations

The ‘implementing’ phase concerns all of the elements that are required to use assessment materials to collect data from target students.

Once assessment items are developed they need to be carefully combined to create assessment instruments.

- Understand how to formulate a comprehensive test design to use efficient sample sizes, to ensure that any measures of change over time remain stable and to ensure that the content of the test is balanced.

- Ensure that the test design parameters are met by instruments.

- Construct test form linkages to enable comparison of the performance of individuals who complete different test forms.
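One way to picture test form linkage is to check that every form shares at least one item block with another form, directly or through a chain of forms. The sketch below is illustrative only: the form names, block labels and the function `forms_are_linked` are hypothetical and do not come from the taxonomy, and a real test design would also balance block positions and content coverage.

```python
def forms_are_linked(forms):
    """Return True if all forms are connected through chains of shared item blocks.

    `forms` maps a form name to the set of item-block labels it contains
    (hypothetical structure, assumed for illustration).
    """
    names = list(forms)
    if not names:
        return True
    visited = {names[0]}
    stack = [names[0]]
    while stack:
        current = stack.pop()
        for other in names:
            # Two forms are directly linked when they share at least one block.
            if other not in visited and forms[current] & forms[other]:
                visited.add(other)
                stack.append(other)
    return len(visited) == len(names)


# Hypothetical three-form design in which each pair of forms shares one block.
forms = {"Form 1": {"A", "B"}, "Form 2": {"B", "C"}, "Form 3": {"C", "A"}}
# Hypothetical design with no common blocks, so results cannot be linked.
unlinked = {"Form 1": {"A", "B"}, "Form 2": {"C", "D"}}

print(forms_are_linked(forms))     # True
print(forms_are_linked(unlinked))  # False
```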

When selecting a sample of students to participate in an assessment programme it is important to ensure that a robust approach is used.

- Consider different approaches to sampling and their advantages and disadvantages.

- Understand how to develop a comprehensive sampling frame that enumerates the target population, including the use of over-sampling if required.

- Identify the use of clusters to assess manageable units such as schools or classes and understand the limitations such approaches can impose.

- Consider approaches to stratification of the sampling frame by areas of interest such as region, school location, gender of students, language of instruction and how to prioritise these.
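As a rough illustration of stratification, the sketch below draws a proportionally allocated stratified random sample of schools from a small sampling frame. The frame, the `location` stratum and the function `stratified_sample` are hypothetical, and proportional allocation is only one of several allocation approaches; operational designs often add cluster sampling within schools and over-sampling of small strata.

```python
import random
from collections import defaultdict


def stratified_sample(frame, stratum_key, total_n, seed=0):
    """Draw a proportionally allocated stratified random sample.

    Each sampled unit gets a design weight equal to the inverse of its
    selection probability within its stratum (N_h / n_h).
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for unit in frame:
        strata[unit[stratum_key]].append(unit)

    sample = []
    for name in sorted(strata):
        units = strata[name]
        # Proportional allocation, with at least one unit per stratum.
        # Rounding means the total may differ slightly from total_n in general.
        n_h = max(1, round(total_n * len(units) / len(frame)))
        n_h = min(n_h, len(units))
        for unit in rng.sample(units, n_h):
            sample.append({**unit, "weight": len(units) / n_h})
    return sample


# Hypothetical sampling frame: 60 urban and 40 rural schools.
frame = [
    {"school_id": i, "location": "urban" if i < 60 else "rural"}
    for i in range(100)
]
sample = stratified_sample(frame, "location", total_n=20)
print(len(sample))  # 20 schools: 12 urban and 8 rural
```

In this example the design weights sum to the number of schools in the frame, which is a quick check that the allocation and weights are consistent.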

To be able to compare data, it is important for students to be assessed under conditions that are as similar as possible.

- Develop a field operations / test administration manual to guide assessment administration and ensure that implementation is monitored for adherence.

- Ensure that independent, trained test administrators manage assessment coordination and administration to ensure that standardised test conditions are applied.

- Managing Assessment Data

- Classical Test Theory and Item Response Theory

- Data Analysis and Scaling

The ‘analysing’ phase concerns all of the activities involved between the collection of raw data from students and the development of reporting scales.

To ensure that data is suitable for analysis, great care needs to be taken in how it is collected and prepared.

- Understand the importance of managing data and the impact that poor data management can have on reporting.

- Consider the advantages and disadvantages of different data capture techniques (manual data entry, scanning OMRs, automatic electronic marking) and the key quality assurance elements that each requires to ensure both data accuracy and data security.

- Identify the key steps required in preparing data for analysis, including data validation and cleaning processes to check for discrepancies, errors and outliers and, where necessary, enabling discrepant data to be checked at the source.
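The validation and cleaning step can be sketched as a set of simple checks that flag records to be verified against the source. The record layout, the response codes (with `"9"` assumed to mean a missing response) and the function `validate_records` are hypothetical; real checks depend on the codebook agreed for the assessment.

```python
def validate_records(records, valid_codes, n_items):
    """Return (student_id, problem) tuples for records that need a source check."""
    problems = []
    seen_ids = set()
    for rec in records:
        sid = rec["student_id"]
        # Duplicate identifiers usually point to a data entry or merge error.
        if sid in seen_ids:
            problems.append((sid, "duplicate student_id"))
        seen_ids.add(sid)

        responses = rec["responses"]
        if len(responses) != n_items:
            problems.append((sid, "wrong number of responses"))
        # Every captured response must be one of the codes in the codebook.
        for position, code in enumerate(responses, start=1):
            if code not in valid_codes:
                problems.append((sid, f"invalid code {code!r} at item {position}"))
    return problems


# Hypothetical captured data for a three-item test.
records = [
    {"student_id": "S001", "responses": ["A", "C", "9"]},
    {"student_id": "S002", "responses": ["B", "E", "A"]},
    {"student_id": "S001", "responses": ["A", "B"]},
]
problems = validate_records(
    records, valid_codes={"A", "B", "C", "D", "9"}, n_items=3
)
for student_id, problem in problems:
    print(student_id, problem)
```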

CTT and IRT are the two main approaches to psychometric analysis and it is important to understand the differences between them.

- Identify differences between CTT and IRT and their strengths and weaknesses.

- Identify uses of CTT and IRT in an assessment program (e.g. pilot, building scales, item evaluation, test evaluation).

- Understand the skills and knowledge required of those who are required to undertake CTT and IRT and ways in which to enhance their capacity when needed.
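To make the contrast concrete, the sketch below computes two common CTT item statistics (facility and an item-rest point-biserial discrimination) and, for comparison, the response probability under the Rasch model, the simplest IRT model. The score matrix and function names are hypothetical, and estimating IRT parameters from data requires specialised software that is not shown here.

```python
import math
from statistics import mean, pstdev


def item_facility(scores, item):
    """CTT facility: proportion of students answering the item correctly."""
    return mean(row[item] for row in scores)


def item_discrimination(scores, item):
    """CTT discrimination: correlation between the item score and the
    rest score (total score excluding the item itself)."""
    x = [row[item] for row in scores]
    rest = [sum(row) - row[item] for row in scores]
    sd_x, sd_rest = pstdev(x), pstdev(rest)
    if sd_x == 0 or sd_rest == 0:
        return float("nan")  # undefined when there is no variation
    mean_x, mean_rest = mean(x), mean(rest)
    cov = mean((a - mean_x) * (b - mean_rest) for a, b in zip(x, rest))
    return cov / (sd_x * sd_rest)


def rasch_probability(ability, difficulty):
    """Rasch model: probability of a correct response given student ability
    and item difficulty, both expressed on the same logit scale."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


# Hypothetical scored responses: rows are students, columns are items (1 = correct).
scores = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
]
print(item_facility(scores, 0))        # 0.75
print(item_discrimination(scores, 0))
print(rasch_probability(0.0, 0.0))     # 0.5
```

CTT statistics such as facility depend on the group of students tested, whereas the Rasch formulation places students and items on a common scale, which is one reason IRT is commonly used for building reporting scales.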

There are many different ways of analysing assessment data and the development of reporting scales is the most robust, but also most complex.

- Consider a range of approaches to analysing data, the contexts in which different ones are appropriate, their resource requirements and which outcomes can be achieved with which approaches.

- Identify common statistical approaches to data analysis that will ensure accurate and reliable results and generate estimates at a range of levels.

- Understand the application of sample weights to enable results to be compared across different strata (if used).
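The effect of sample weights can be shown with a small weighted-mean calculation. The scores, the weights and the function `weighted_mean` below are hypothetical; the example assumes rural students were over-sampled, so each sampled urban student represents more students in the population than each sampled rural student.

```python
def weighted_mean(values, weights):
    """Mean of `values` in which each value counts in proportion to its weight."""
    total_weight = sum(weights)
    if total_weight == 0:
        raise ValueError("weights must not sum to zero")
    return sum(v * w for v, w in zip(values, weights)) / total_weight


# Hypothetical sample: two urban students (weight 90 each) and
# two rural students (weight 10 each).
scores = [60, 70, 40, 50]
weights = [90, 90, 10, 10]

unweighted = sum(scores) / len(scores)
weighted = weighted_mean(scores, weights)
print(unweighted)  # 55.0
print(weighted)    # 63.0
```

Without weights, the over-sampled rural group pulls the overall estimate down; applying the weights restores each stratum to its share of the population.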

- Reporting and Dissemination

- Using Data Visualisation and Dashboards

- Informing Curricula and Resource Development

- Informing Teacher Training and Professional Learning

- Undertaking Secondary Analysis

- Informing Policy

The ‘using’ phase concerns all of the elements that are involved in making use of assessment data to inform improvements in education.

Once data has been analysed, it is important to design reporting and dissemination strategies that meet the needs of different stakeholders.

- Identify the key stakeholders for reporting and dissemination of assessment results, the discrete needs that each group has and the type of assessment reporting that each group is likely to be able to understand.

- Consider how to develop a communication strategy that incorporates a range of approaches to cater for stakeholder diversity and different reporting formats.

- Understand common means of dissemination of assessment results and the advantages and disadvantages of each one, in addition to their likelihood of stimulating active dialogue around educational policy and practice.

Data visualisations and dashboards can be used to help users understand and engage with assessment findings.

- Consider the advantages of data visualisations and the types of analysis they enable.

- Identify a range of common approaches to the visualisation of data and their advantages and disadvantages.

- Consider the possibility of using different dashboards to illustrate assessment data, and the purpose, accessibility and simplicity of each one.

Assessment data can be used to provide insights to reform the design of curricula and the development of learning resources.

- Consider the sources of assessment data that can inform curriculum reform and examples of where this has been done.

- Consider the sources of assessment data that can inform the development of educational resources and examples of where this has been done.

- Consider the limitations of assessment data in informing curricula and resource development and alternative sources of insight.

Assessment data can be used to provide insights to the reform of teacher training and teaching practice.

- Consider the ways in which assessment data can be used to inform teaching practice, including through the assessment of teachers.

- Identify the ways in which assessment data can inform teacher training and consider examples of where this has been done.

- Consider the insights that assessment data can offer to inform teacher professional learning and consider examples of where this has been done.

Providing assessment data to researchers for the purpose of further analysis can generate useful insights into issues that are important to policy makers.

- Consider the value of undertaking secondary analysis of assessment data to inform education policy imperatives.

- Identify key issues that assessment data can shed light on and examples of how assessment data has been used by education stakeholders.

- Understand approaches to secondary data analysis, including making data sets available to researchers and the steps required to prepare data files for external analysis.

It is important that assessment data is used to inform educational policy making and to drive assessment reform.

- Understand how assessment data can be used to inform an analysis of policy options and to drive educational reform.

- Consider approaches to writing policy briefs and look at the use of assessment data at different stages of the policy cycle.

- Identify how to formulate policy research questions that secondary data analysis may be able to inform.